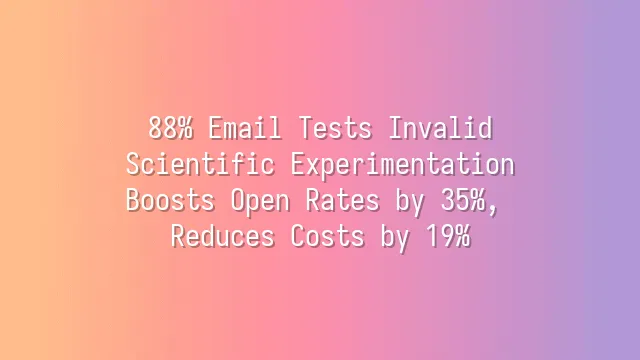

Are 88% of Email Tests Invalid? Scientific Experimentation Lifts Open Rates by Up to 35% and Cuts Lead Costs by 19%

Why Your Tests Always Stall

Most teams mistakenly believe that frequent testing equals efficient optimization—but the reality is harsh: 88% of A/B tests fail to deliver significant, reproducible open rate improvements. This not only wastes time but also erodes the team’s trust in data-driven decision-making. The root cause isn’t the tools; it’s the methodology: most tests focus on superficial variables like “whether to add an emoji” or “the length of the subject line,” lacking scientifically grounded hypotheses about user psychology.

This kind of blind tweaking means you can’t distinguish between random fluctuations and genuine behavioral patterns. Real breakthroughs come from validating cognitive triggers—such as scarcity cues (“Only 3 hours left”), identity prompts (“Exclusively for designers”), or expectation violation structures (“Don’t click this email”)—to see if they truly activate users’ motivation to open. When you upgrade your tests from “word games” to “psychological insight experiments,” every result becomes reusable business insight.

Ineffective tests dilute trust; scientific validation builds wisdom: the former consumes resources, while the latter builds your own proprietary decision-making engine.

Building a High-Confidence Testing Framework

A truly reliable subject line A/B test must include four core elements: clear hypotheses, controlled variables, sufficient sample size, and explicit statistical significance criteria. One SaaS company ignored p-value calibration, and 40% of its decisions ended up resting on false positives; after it introduced two-tailed tests and multi-cycle sampling, that error rate fell to 15% and decision confidence increased by 80%.

These technical components aren’t academic fluff. A two-tailed test checks for a difference in either direction, so a variant that underperforms the control is flagged rather than ignored, which protects you from overly optimistic resource misallocation. A sufficient sample size ensures conclusions cover both active and silent user segments. Testing across more than three send periods captures the time-sensitive nature of user behavior, an insight that a single point-in-time analysis could never reveal.
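As a minimal sketch of what an explicit significance criterion and a sufficient sample size can look like in practice, the Python below runs a two-tailed two-proportion z-test on open counts and estimates how many recipients each variant needs. The function names, the 5% significance level, and the 80% power target are illustrative assumptions, not values taken from the case above.

```python
import math
from statistics import NormalDist


def two_tailed_z_test(opens_a, sent_a, opens_b, sent_b):
    """Two-proportion z-test on open rates; returns (z, two-tailed p-value)."""
    rate_a = opens_a / sent_a
    rate_b = opens_b / sent_b
    # Pooled open rate under the null hypothesis that A and B perform the same.
    pooled = (opens_a + opens_b) / (sent_a + sent_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    z = (rate_b - rate_a) / std_err
    # Two-tailed: count extreme results in both directions.
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


def sample_size_per_variant(baseline, lift_abs, alpha=0.05, power=0.8):
    """Approximate recipients needed per variant to detect an absolute lift.

    alpha and power are assumed defaults; adjust them to your own criteria.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-tailed threshold
    z_beta = NormalDist().inv_cdf(power)
    p1, p2 = baseline, baseline + lift_abs
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / lift_abs ** 2)


if __name__ == "__main__":
    z, p = two_tailed_z_test(opens_a=200, sent_a=1000, opens_b=260, sent_b=1000)
    print(f"z = {z:.2f}, p = {p:.4f}")
    print(sample_size_per_variant(baseline=0.20, lift_abs=0.02))
```

Under these assumptions, detecting a 2-point absolute lift on a 20% baseline open rate takes on the order of 6,500 recipients per variant, which is why small lists rarely produce conclusive subject line tests.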

Structure is strategy: when the testing framework itself becomes a credibility engine, every open rate improvement you observe truly points to reusable business insights, rather than random noise.

The Real Returns of Quantitative Optimization

Successful subject line optimization isn’t just about boosting open rates—it’s a strategic lever that directly reduces lead costs and drives revenue growth. According to HubSpot’s 2025 Marketing Benchmark Report, effective tests average a 22%-35% increase in open rates, corresponding to a 19% reduction in the cost per lead. One leading e-commerce platform achieved a 27% surge in click-through rates and over 48,000 additional quarterly conversion orders by combining “urgency + personalization” (e.g., “Your exclusive offer expires in 3 hours”).

Take a SaaS company that sends 5 million emails a year with a 3.2% conversion rate: every 1 percentage point increase in open rate generates over RMB 120,000 in traceable annual revenue. However, be wary of diminishing marginal returns: the first two optimizations often account for 80% of the gains, while subsequent improvements slow down. Once gains flatten, prioritize content layering and audience segmentation over continuously stacking test variables.
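The arithmetic behind the SaaS example can be sketched as follows. This assumes the 3.2% conversion rate applies to opened emails, which the example does not state; under that assumption the RMB 120,000 figure implies a value per conversion, which the code derives rather than takes as given. All function names are hypothetical.

```python
def extra_conversions(emails_per_year, open_rate_lift, conversion_rate):
    """Additional conversions from an absolute lift in open rate.

    Assumption: conversion_rate is measured per opened email.
    """
    extra_opens = emails_per_year * open_rate_lift
    return extra_opens * conversion_rate


def implied_value_per_conversion(annual_revenue_gain, conversions):
    """Revenue each extra conversion must carry to match the stated gain."""
    return annual_revenue_gain / conversions


if __name__ == "__main__":
    # Figures from the example: 5 million emails, 3.2% conversion,
    # a 1 percentage point open rate lift, RMB 120,000 in annual revenue.
    conversions = extra_conversions(5_000_000, 0.01, 0.032)
    value = implied_value_per_conversion(120_000, conversions)
    print(f"extra conversions per year: {conversions:.0f}")
    print(f"implied value per conversion: RMB {value:.2f}")
```

With these inputs, one point of open rate corresponds to about 50,000 extra opens and about 1,600 extra conversions a year, so the stated revenue implies roughly RMB 75 per conversion. Plugging in your own volume and conversion value gives a quick check on whether another round of testing is likely to pay for itself.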

Now that you’ve seen the scale of the value, the question is no longer whether optimization is worth doing, but how to replicate success systematically.

Choosing Automation Tools to Accelerate Iteration

Once you’ve quantified the direct returns of subject line optimization, the next critical factor is whether you can complete the next round of testing five times faster before user attention fades. Email platforms that integrate multi-variable splitting and automatic winner determination (such as Mailchimp, Brevo, and Sendinblue) mark the turning point from “accidental success” to “systematic growth.”

- Mailchimp: offers a simplified sample size calculator that lowers the entry barrier, making it a good fit for startups that need to move quickly, at the expense of dynamic traffic allocation capabilities.

- Brevo: integrates deeply with CRMs via API, allowing test strategies to automatically adjust according to different stages of the customer lifecycle—for example, prioritizing “recall-type” subjects for silent user groups.

- Sendinblue: led third-party evaluations in 2024 for delivery consistency, with an error rate below 1.8%; its “dynamic weight allocation” technology can reallocate 60% of traffic to potentially winning versions in real time, reducing inefficient exposure by up to 43%, which directly translates into lower customer acquisition costs.
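To make the idea of reallocating traffic toward a likely winner concrete, here is a minimal sketch using Thompson sampling, a standard multi-armed bandit technique. It is an illustration only: none of the platforms above is known to work this way, and the function name and data layout are assumptions.

```python
import random


def thompson_allocate(stats, batch_size, rng=None):
    """Split the next batch of sends across subject line variants.

    stats maps a variant name to (opens, sends) observed so far.
    Returns a dict mapping each variant name to its share of the batch.
    """
    rng = rng or random.Random()
    allocation = {name: 0 for name in stats}
    for _ in range(batch_size):
        # Beta(1 + opens, 1 + non-opens) is the posterior open rate
        # under a uniform prior; draw one plausible rate per variant.
        draws = {
            name: rng.betavariate(1 + opens, 1 + sends - opens)
            for name, (opens, sends) in stats.items()
        }
        # Route this send to the variant with the highest sampled rate.
        best = max(draws, key=draws.get)
        allocation[best] += 1
    return allocation


if __name__ == "__main__":
    observed = {"urgency": (220, 1000), "plain": (180, 1000)}
    print(thompson_allocate(observed, batch_size=500, rng=random.Random(42)))
```

Variants with weaker evidence still receive some traffic, so an early unlucky result does not permanently eliminate a subject line, while most sends shift to the version that currently looks strongest.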

The power of these tools depends on the rigor of the initial setup—precise hypotheses and clear success metrics are the prerequisites for automation to unlock value. This isn’t just a tech choice; it’s the practical validation of a scientific testing culture.

Building a Sustainable Optimization Roadmap

Once you’ve implemented an automated testing process, the real challenge begins: how do you ensure each A/B test isn’t isolated, but instead becomes an engine driving overall marketing evolution? The answer lies in building a four-step cyclical path: goal setting → hypothesis generation → execution monitoring → knowledge accumulation.

We’ve observed that teams without systematic planning often return to square one even after achieving a 27% open rate boost in a single test because they can’t reproduce the results. One key pitfall is the “winner’s curse”: the winning subject line from the previous round may no longer work with new audiences or in different seasons. To avoid this, we recommend using standardized tools:

- Test calendar planning sheet: ensures resource alignment and avoids duplicate conflicts.

- Results attribution matrix: strips away random factors and locks in the true influencing variables.
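One possible shape for a results attribution matrix is sketched below. The record fields, the grouping by trigger and segment, and the rule that a trigger counts as reproducible only after significant wins in at least three distinct send periods are all assumptions made for illustration, not a prescribed format.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ExperimentRecord:
    trigger: str       # hypothesis tested, e.g. "scarcity" or "identity"
    segment: str       # audience segment that received the test
    send_period: str   # label for the send window, e.g. "2025-W14"
    lift: float        # absolute open rate change versus control
    significant: bool  # whether the result met the significance criterion


def attribution_matrix(records, min_periods=3):
    """Summarize results per (trigger, segment) pair.

    min_periods is an assumed threshold for calling a win reproducible.
    """
    grouped = defaultdict(list)
    for record in records:
        grouped[(record.trigger, record.segment)].append(record)

    matrix = {}
    for key, rows in grouped.items():
        wins = [r for r in rows if r.significant and r.lift > 0]
        win_periods = {r.send_period for r in wins}
        matrix[key] = {
            "tests": len(rows),
            "significant_wins": len(wins),
            "mean_lift": sum(r.lift for r in rows) / len(rows),
            "reproducible": len(win_periods) >= min_periods,
        }
    return matrix
```

Keeping one row per test and summarizing by hypothesis, rather than by individual subject line, is what lets a result from one campaign inform the next one.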

One B2C brand used this framework to increase its average open rate by 19.3% over three consecutive quarters, and more importantly, its cross-departmental learning archive boosted new members’ test efficiency by 40%. The ultimate goal isn’t higher open rates, but a data-driven cultural transformation—making every email an incremental cognitive gain for the organization.

Once you’ve built a scientific A/B testing framework, mastered cognitive triggers, and started treating every email as an incremental cognitive gain for the organization, scaling up depends on feeding these high-confidence insights into an efficient, compliant, and traceable execution loop. That is the core value Bay Marketing offers. It goes beyond sending emails: it starts with AI-powered opportunity capture and combines intelligent generation, behavioral feedback, and multi-channel interaction to deliver your validated subject lines, personalization strategies, and segmentation logic to real, active, reachable target customers, so that every cognitive breakthrough turns into measurable business results.

Whether you’re working the overseas long-tail market in cross-border e-commerce or expanding into high-potential segments of the domestic education industry, Bay Marketing’s global server network, delivery rate guarantee of over 90%, spam score tool, and real-time data dashboard help you turn the momentum of scientific testing into performance growth. You now have the methodology, and you deserve a smart email marketing partner that understands your strategy, respects your data, and supports your long-term evolution. Visit the Bay Marketing website to start your high-confidence marketing automation journey.